It's a tale of two PC markets this week, with Gartner and IDC each releasing their latest reports on worldwide PC shipments, but neither story is particularly positive.

The less-negative news comes from IDC, which found evidence of a flat market. That's right, this was the good news.

IDC reported that worldwide there were some 60.4 million PCs sold in the January-to-March period, amounting to 0.0 percent growth over the year-ago quarter. The reason that's good news is that IDC had previously forecast a drop of 1.5 percent, so flat is better than declining.

IDC also found some green shoots related to Windows 10. In its discussion of the quarter, IDC noted that businesses are moving to Windows 10 at a steady clip.

Speaking of the U.S. market, Neha Mahajan, senior research analyst for Devices & Displays at IDC, stated, "The year kicked off with optimism returning to the U.S. PC market, especially on the notebook side. A likely rise in commercial activity amidst a positive economic environment is expected to further strengthen demand."

Overall, Jay Chou, research manager of IDC's Personal Computing Device Tracker, called the path that PCs are on "resilient" and predicted "modest commercial momentum through 2020."

Even that modest optimism was not evident in an assessment released on the same day by Gartner. Gartner, while sizing the market slightly larger at 61.7 million unit shipments for the quarter, reported a 1.4 percent decline in PC shipments for Q1.

Gartner Principal Analyst Mikako Kitagawa affixed the blame primarily to the Chinese market. "The major contributor to the decline came from China, where unit shipments declined 5.7 percent year over year," Kitagawa said in a statement. "This was driven by China's business market, where some state-owned and large enterprises postponed new purchases or upgrades, awaiting new policies and officials' reassignments after the session of the National People's Congress in early March."

Where IDC saw some modest improvements in the U.S. market, Gartner found red ink there, too, reporting a 2.9 percent decline in U.S. PC shipments from Q1 2017 to Q1 2018. In all, Gartner declared Q1 2018 the 14th consecutive quarter of decline going all the way back to the second quarter of 2012.

Posted by Scott Bekker on April 13, 2018

Mimecast on Tuesday unveiled a revamped partner program newly organized to offer a consistent global experience and three new channel executive appointments.

Major updates from the old Mimecast channel programs for the new Mimecast Global Partner Program include:

- restructured discounts and rewards to better align with motions that attract new clients or deepen existing engagements,

- new training programs, especially to cover Mimecast's integrated cloud suite,

- partner access to account resources like partner account managers, sales engineers and marketing managers, and

- a new partner dashboard.

Julian Martin, a 10-year Mimecast veteran, is now the vice president for global channel and operations.

Martin described the old channel structure as having developed differently in different regions of the world over the last 10 years for Mimecast, which specializes in e-mail and data security products and does a substantial portion of its business with Office 365 customers.

"As we have continued to engage with our rapidly expanding ecosystem of resellers globally, we developed a stronger global strategy," Martin said in a statement. "Simplicity is a core value of the new program and we want to ensure our joint engagements with resellers are easy and rewarding for everyone involved as we service our customers together."

Other new channel appointments announced Tuesday include Shawn Pearson, a former Hewlett-Packard vice president of inside sales, who is now vice president of channel sales for North America at Mimecast; and Rema Lolas, who is taking over as channel director in Australia and New Zealand.

The changes come as Mimecast says it is driving toward a primarily channel-focused business. In the company's Q3 earnings call in February, Chairman and CEO Peter Bauer said the range of business coming in from the channel is approaching 75 percent of new sales. At the time, he telegraphed the investments in channel that Mimecast announced Tuesday, and also made clear where Mimecast sees its biggest opportunities geographically.

"North America and continental Europe are two real focus areas for us [in] building out our channel practice even further over the next year," Bauer said on the investment call.

Posted by Scott Bekker on April 10, 2018

Cryptomining leapfrogged almost all other forms of malware detected in the first quarter of 2018, according to a new security report from Malwarebytes Labs.

"Cryptomining has just gone insane," said Adam Kujawa, director of Malwarebytes Labs, in an interview about the report. "It's all over the place. We've never seen a mass migration to the use of one particular type of threat so fast by so much of the cybercrime community as we have seen with cryptominers."

Malwarebytes on Monday released "Cybercrime tactics and techniques: Q1 2018," the latest in its quarterly series of reports based on telemetry from its business and consumer products.

Malwarebytes Labs' top 10 business and consumer malware detections in Q1 2018. (Source: "Cybercrime tactics and techniques: Q1 2018")

There are legitimate miners that get a user's consent before repurposing all or most of their CPU capacity toward mining for cryptocurrencies. Malwarebytes' report focuses on the other kinds -- malware-based miners that are often delivered via existing malware families and browser-based miners that hijack a victim's processor through drive-by attacks or malicious browser extensions.

The company found that cryptomining detections were way up in the quarter for consumers, with Android miners in particular surging to 40 times more detections this quarter than last. There was also a boom in March in Mac-based detections of malware-based miners, browser extensions and cryptomining apps, the company found.

For now, it's mainly a consumer problem. Business customers saw a 27 percent increase in cryptomining -- a significant jump to be sure, but nowhere near the levels on the consumer side.

This security report is a trailing indicator given that it covers the first three months of the year. Yet the cryptomining spike documented by Malwarebytes is tracking a little behind the price movement on the flagship cryptocurrency, Bitcoin, which had a recent peak in December but has been mostly falling from those highs over the last quarter.

Damages from cryptomining are squishy for businesses to calculate. A drive-by, browser-based attack, for example, can sometimes be stopped by simply shutting down the offending tab. Other types of cryptomining malware can be much more insidious.

How much damage is really done? There's lost productivity for sure, but Kujawa argues the malware delivery vectors that brought the cryptomining malware to systems will represent a lasting problem, even if cryptocurrency values don't rebound quickly and attackers lose interest in the attacks.

"A miner may only cause minimal damage, but any infection that you don't want to be on your system can install different stuff," he said. "The attacker sends a message to the miner: 'Hey install some ransomware for me, worm, go back to the old tricks.' It's like keeping your back door unlocked."

Posted by Scott Bekker on April 09, 2018

Longtime senior Microsoft channel executive Jenni Flinders has landed the channel chief role at VMware Inc.

Flinders' formal title at VMware is vice president, Worldwide Channels, and she reports to Brandon Sweeney, senior vice president, Worldwide Commercial and Channel Sales.

The Palo Alto, Calif.-based enterprise virtualization and cloud giant boasts an ecosystem of 75,000 partners.

Flinders left Microsoft in April 2015 after nearly 15 years with the company. She joined Microsoft in 2000 in marketing and sales roles in South Africa and later ran the midmarket business for Latin America out of Microsoft's Ft. Lauderdale, Fla. office before joining then-Microsoft worldwide channel chief Allison Watson's team in the Worldwide Partner Group as chief of staff.

From 2009 until her departure in 2015, she was channel chief for Microsoft's U.S. partners.

Since 2015, Flinders has been CEO of Daarlandt Partners, a channel strategy consulting practice.

Posted by Scott Bekker on April 05, 2018

In the midst of a pivot from WAN optimization to SD-WAN, cloud networking, and application and network performance monitoring, Riverbed Technology is also changing CEOs.

Effective immediately, Paul Mountford, a four-year veteran at Riverbed, is taking over as CEO of the 16-year-old company from co-founder Jerry M. Kennelly, who will retire after he serves in an advisory role for the rest of the month. Mountford's appointment follows an internal and external search by the Riverbed board of directors.

Mountford was previously senior vice president and chief sales officer at Riverbed, which has undergone significant changes to the global sales organization and partner program during his time in the role. He spent 16 years in senior roles at Cisco and was CEO of the Web intelligence company Sentillian.

Riverbed has been both acquiring and organically developing products for software-defined solutions and managing application performance for the last few years. In addition to its business in SteelHead WAN optimization appliances, the company is now emphasizing its SteelCentral performance management platform and control suite and its SteelConnect SD-WAN solution, which includes optimization of Microsoft Azure and Amazon Web Services (AWS).

The company is in the midst of rolling out a new partner program called Riverbed Rise, in which partners earn dividends, based on sales or training, that can be converted to rebates, market development funds or training vouchers. Riverbed will fully transition to Rise on Aug. 1.

Posted by Scott Bekker on April 05, 2018

Microsoft got its legal wish.

That wish fulfillment in the form of the CLOUD Act came about in such a surprising fashion that it took Microsoft more than a week to release a full public response.

In a lengthy blog post Tuesday, Microsoft President and Chief Legal Officer Brad Smith admitted that passage of the CLOUD Act on March 23 was a "bit of a shock."

Congress slipped the CLOUD Act into a 2,000-plus-page omnibus bill that President Donald Trump signed after a brief show of protest. Trump had tweeted that he might veto the bill, although his objections to the $1.3 trillion bill that narrowly averted a government shutdown involved other aspects of the legislation, such as the level of border-wall spending and a lack of action on DACA. The CLOUD Act portion did not come up in most news coverage of the omnibus bill.

Microsoft has been lobbying hard for some time for the CLOUD Act, which stands for Clarifying Lawful Overseas Use of Data Act. The company urged the Supreme Court during oral arguments in the Microsoft warrant case in late February to wait for the CLOUD Act.

"This Court's job is to defer, to defer to Congress to take the path that is least likely to create international tensions. And if you try to tinker with this, without the tools that -- that only Congress has, you are as likely to break the cloud as you are to fix it," said Microsoft lawyer E. Joshua Rosenkranz in his closing statement in the case, which involved U.S. law enforcement efforts to obtain customer data stored by Microsoft in a datacenter in Ireland.

For his part, Michael R. Dreeben, deputy solicitor general for the U.S. Department of Justice, was probably shocked by the timing, as well. Dreeben had argued that the high court, which is expected to rule on the case in June, shouldn't wait for Congress. "As to the question about the CLOUD Act, as it's called, it has been introduced. It's not been marked up by any committee. It has not been voted on by any committee. And it certainly has not yet been enacted into law," Dreeben said just a month before the act passed.

While the effect of the new law on the high court's ruling is hard to predict, Microsoft's Smith blogged this week that the update, a revision of the legal code written with cloud computing in mind, will help U.S. cloud providers like Microsoft balance cooperating with legitimate law enforcement requests against protecting the privacy rights of international customers.

The road forward from the passage of the law until it starts yielding evidence for U.S.-based law enforcement efforts could be somewhat long. The act calls on the executive branch to establish reciprocal international agreements allowing law enforcement in both countries to access data in each other's countries. Yet as a first step, the administration must also establish that each country with which it creates an agreement protects privacy and human rights. Congress also has 180 days to review the agreements.

Smith's interpretation is that the law leaves room for cloud providers to challenge law enforcement requests during the interim period. "The CLOUD Act both creates the foundation for a new generation of international agreements and preserves rights of cloud service providers like Microsoft to protect privacy rights until such agreements are in place," Smith said.

Unstated in Smith's blog entry is the sigh of relief. Right now, U.S.-based technology companies dominate the global cloud computing infrastructure market. But there is no iron law that this state of affairs must continue. The Edward Snowden revelations of 2013 marked a huge challenge to international businesses' and governments' trust in U.S.-based companies' ability and willingness to protect their data from the U.S. government. Microsoft, Google and Amazon have been looking over their shoulders for potential new international competitors and contemplating a potentially fragmented global market where U.S.-based cloud providers could be shut out of some countries over data sovereignty and citizen privacy concerns.

Smith laid out that line of thinking in a February post about the Supreme Court case. "U.S. companies are leaders in cloud computing. This leadership is based on trust. If customers around the world believe that the U.S. Government has the power to unilaterally reach in to datacenters operated by American companies, without reference or notification to their own government, they won't trust this technology," Smith wrote.

The passage of the CLOUD Act gives Microsoft and its channel partners a much stronger privacy story for international customers and an opportunity, along with other U.S.-based cloud providers, to continue leading the global charge for cloud computing.

Posted by Scott Bekker on April 04, 2018

Microsoft launched a new program on Monday to potentially train tens of thousands of people in artificial intelligence skills and concepts.

The Microsoft Professional Program for Artificial Intelligence will consist of 10 parts, each of which is supposed to take eight to 16 hours to complete. Attendees can either audit the courses or pay in order to get a certificate of completion.

In a feature-style article to announce the new track, Microsoft framed the program as a massive open online course (MOOC) that grew out of Microsoft's internal AI training initiatives, including one project-based, semester-style program called AI School 611.

"The program provides job-ready skills and real-world experience to engineers and others who are looking to improve their skills in AI and data science through a series of online courses that feature hands-on labs and expert instructors," Microsoft noted in the description of the new Microsoft Worldwide Learning Group program.

The nine courses include an intro to AI, using Python to work with data, using math and statistics techniques, considering ethics for AI, planning and conducting a data study, building machine learning tools, building reinforcement learning models, and developing applied AI solutions. The applied AI section has three options -- natural-language processing, speech-recognition systems, or computer vision and image analysis.

The track ends with a final project called the Microsoft Professional Capstone: Artificial Intelligence. Details of the capstone project are coming soon, according to Microsoft's Web site explaining the program.

Microsoft first unveiled the idea of broad-based courses in 2016 under the name Microsoft Professional Degree, and later renamed the idea as the Microsoft Professional Program.

The first track under the program was Data Science. Microsoft currently also offers Big Data, Front-End Web Development, Cloud Administration, DevOps, IT Support and Entry Level Software Development.

Posted by Scott Bekker on April 02, 2018

Network managers need to be on the lookout for password-spray attacks, according to warnings from the FBI and U.S. Department of Homeland Security.

In a password-spray attack, a hacker tests a single password against multiple user accounts at an organization. The method often involves weak passwords, such as Winter2018 or Password123!, and can be an effective hacking technique against organizations that are using single sign-on (SSO) and federated authentication protocols, but that haven't deployed multifactor authentication.

By hitting multiple accounts, the method can test a lot of user names without triggering account-lockout protections that kick in when a single user account gets hit with multiple password attempts in a row.
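On the defensive side, the pattern described above (one source, many accounts, few attempts per account) can be looked for in failed-login records. The following is a minimal sketch, not a method taken from the US-CERT alert or from Microsoft's guidance. The function name `find_spray_sources`, the `(timestamp, source, account)` record layout and all thresholds are assumptions chosen for illustration.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def find_spray_sources(failures, window=timedelta(minutes=30),
                       min_accounts=10, max_per_account=2):
    """Flag sources whose failed logins look like password spraying.

    failures: iterable of (timestamp, source, account) tuples (hypothetical
    layout). A source is flagged when, inside one sliding time window, it
    failed against at least `min_accounts` distinct accounts while trying
    each account no more than `max_per_account` times.
    """
    by_source = defaultdict(list)
    for ts, source, account in failures:
        by_source[source].append((ts, account))

    flagged = set()
    for source, events in by_source.items():
        events.sort()
        counts = defaultdict(int)  # failures per account inside the window
        start = 0
        for ts, account in events:
            counts[account] += 1
            # Drop events that have aged out of the sliding window.
            while ts - events[start][0] > window:
                old = events[start][1]
                counts[old] -= 1
                if counts[old] == 0:
                    del counts[old]
                start += 1
            if (len(counts) >= min_accounts
                    and max(counts.values()) <= max_per_account):
                flagged.add(source)
                break
    return flagged


# Illustrative data only: one source sprays 12 accounts once each, while
# another hammers a single account 12 times (classic brute force).
t0 = datetime(2018, 3, 27, 9, 0)
sample = [(t0 + timedelta(minutes=i), "203.0.113.5", f"user{i}")
          for i in range(12)]
sample += [(t0 + timedelta(minutes=i), "198.51.100.7", "admin")
           for i in range(12)]
print(find_spray_sources(sample))
```

Only the first source is flagged. The second one is the kind of repeated single-account guessing that ordinary lockout policies already catch, which is why the sketch ignores it. Real traffic would need tuned thresholds and more signals than a source address, since attackers can spread attempts across many addresses.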

"According to information derived from FBI investigations, malicious cyber actors are increasingly using a style of brute force attack known as password spraying against organizations in the United States and abroad," the agencies declared in a US-CERT technical alert issued Tuesday evening.

Prompting the alert was the disclosure last Friday of a federal indictment against nine Iranian nationals associated with the Mabna Institute, a private Iran-based company accused of hacking on behalf of the Iranian state. The main focus of that indictment was a massive, four-year spear-phishing campaign to steal credentials from thousands of university professors whose publications could allegedly advance Iranian research interests.

Also caught up in the alleged Iranian effort were 36 private companies in the United States, 11 companies in Europe and multiple U.S. government agencies and non-government organizations, and the method of attack for those organizations was password spraying.

According to the indictment:

In order to compromise accounts of private sector victims, members of the conspiracy used a technique known as 'password spraying,' whereby they first collected lists of names and email accounts associated with the intended victim company through open source Internet searches. Then, they attempted to gain access to those accounts with commonly-used passwords, such as frequently used default passwords, in order to attempt to obtain unauthorized access to as many accounts as possible.

Once they obtained access to the victim accounts, members of the conspiracy, among other things, exfiltrated entire email mailboxes from the victims. In addition, in many cases, the defendants established automated forwarding rules for compromised accounts that would prospectively forward new outgoing and incoming email messages from the compromised accounts to email accounts controlled by the conspiracy.

The US-CERT technical alert refers to the indictment as having been handed up in February, which could explain Microsoft's detailed guidance for deterring password-spray attacks in a high-profile blog post on March 5. In that post, Alex Simons, director of program management for the Microsoft Identity Division, called password spray "a common attack which has become MUCH more frequent recently," and declared, "Password spray is a serious threat to every service on the Internet that uses passwords." The new government alert linked back to the March 5 Microsoft post as a mitigation resource.

While the Mabna-related password spraying clearly has a lot to do with the new alert, US-CERT warned that others are currently using the attack. "The techniques and activity described herein, while characteristic of Mabna actors, are not limited solely to use by this group," the alert stated.

This is US-CERT's third technical alert this year. Previous alerts warned about the Meltdown and Spectre side-channel vulnerabilities and Russian government cyberactivity targeting critical U.S. infrastructure.

Posted by Scott Bekker on March 28, 2018

Patches that were released in January to protect Windows 7 from the Meltdown flaw may have opened an even worse can of worms for the OS, according to one security researcher.

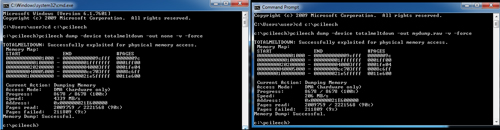

Ulf Frisk, a security researcher who specializes in direct memory access (DMA) attacks, described the problem this week in a blog post called "Total Meltdown?"

The January patch was intended to address the Meltdown flaw in Intel, IBM POWER and ARM-based processors that emerged in January and theoretically allows a rogue process to read all memory on a system.

"[The patch] stopped Meltdown but opened up a vulnerability way worse...It allowed any process to read the complete memory contents at gigabytes per second, oh -- it was possible to write to arbitrary memory as well," wrote Frisk, who is the author of the PCILeech memory access attack toolkit, and who described himself in a DEFCON 24 presentation in 2016 as a penetration tester specializing in online banking security and working in Stockholm, Sweden.

Using his PCILeech tool, researcher Ulf Frisk demonstrates the speed of memory dumping from Windows 7 with the January patches at 4GB/s (left). The dump speed is slightly slower when dumping the memory to disk (right). (Image source: Ulf Frisk)

"No fancy exploits were needed. Windows 7 already did the hard work of mapping in the required memory into every running process. Exploitation was just a matter of read and write to already mapped in-process virtual memory. No fancy APIs or syscalls required -- just standard read and write," Frisk said.

The flaw does not affect Windows 10 or Windows 8, according to Frisk.

The problem appears to have been introduced by the Windows 7 patches released in January, during the industrywide scramble to address the Meltdown and related Spectre flaws whose existence was revealed slightly ahead of schedule. Some of the first-generation patches caused reboot and slowdown issues, among other problems.

Frisk said the subsequent March patch for Windows 7 fixed the flaw, and he discovered the problem after the March patch was released.

Posted by Scott Bekker on March 28, 2018

Could Microsoft's cloud strategy, partner channel and customer base help it vault ahead of its tech rivals to become the first trillion-dollar company?

Apple, Amazon and Alphabet (Google) have been front-runners in investor speculation about which company could be first to reach the psychological milestone of a trillion-dollar market capitalization.

Attention around the question peaked near the market's recent top in January and has settled considerably as stocks have fallen since. In addition, Facebook, which had been a little further back in the market cap sweepstakes, has completely worked its way out of the conversation in the midst of its recent storm of controversy over data privacy that has severely affected the stock price.

An analyst at Morgan Stanley revived the tech market cap question on Monday with a high-profile note to clients predicting Microsoft will reach a $1 trillion market cap within 12 months.

"Strong positioning for ramping public cloud adoption, large distribution channels and installed customer base, and improving margins support a path to $50 billion in EBIT and a $1 trillion market cap for MSFT," said Morgan Stanley's Keith Weiss in a note quoted by CNBC.

Shares of MSFT rose more than 5.5 percent after Morgan Stanley's note.

Here are the companies' relative market caps, according to Yahoo! Finance:

- Apple: $854 billion

- Amazon: $734 billion

- Alphabet (Google): $712 billion

- Microsoft: $710 billion

- Facebook: $453 billion
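For a sense of scale, the gap between each figure above and the $1 trillion mark can be worked out directly. This is a rough sketch using only the market caps listed in this post. The names `MARKET_CAPS_BILLIONS` and `gain_needed` are made up for illustration, and the calculation treats a company's value as moving in a single step.

```python
# Market caps in billions of dollars, as listed above.
MARKET_CAPS_BILLIONS = {
    "Apple": 854,
    "Amazon": 734,
    "Alphabet (Google)": 712,
    "Microsoft": 710,
    "Facebook": 453,
}
TARGET_BILLIONS = 1000  # $1 trillion


def gain_needed(cap, target=TARGET_BILLIONS):
    """Return the percent increase required for `cap` to reach `target`."""
    return (target / cap - 1) * 100


for name, cap in MARKET_CAPS_BILLIONS.items():
    print(f"{name}: {gain_needed(cap):.1f}% to reach $1 trillion")
```

By this arithmetic, Microsoft would need a gain of roughly 41 percent from the listed figure, compared with about 17 percent for Apple.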

Posted by Scott Bekker on March 26, 2018

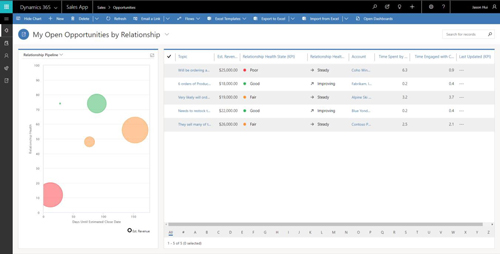

Microsoft unveiled details and highlights of the upcoming Spring '18 release of Microsoft Dynamics 365 on Wednesday at Business Forward Amsterdam.

"We're unleashing a wave of innovation across the entire product line with hundreds of new capabilities and features in three core areas: new business applications; new intelligent capabilities infused throughout; and transformational new application platform capabilities," said James Phillips, corporate vice president of the Microsoft Business Applications Group, in a blog post unveiling the changes.

Dynamics 365 for Marketing

One hotly anticipated component that will be generally available on April 2, when many of the capabilities of the spring release are set to begin rolling out, is the overdue Dynamics 365 for Marketing application.

"This is a new marketing automation application for companies that need more than basic email marketing at the front end of a sales cycle to turn prospects into relationships," Phillips said of the component, which was originally announced in October 2016 and was supposed to ship a year ago.

Microsoft has previously described the forthcoming module as aimed at smaller businesses and has steered larger companies to the Adobe Marketing Cloud suite, which is already available to Dynamics 365 users. A public preview of Dynamics 365 for Marketing has been available since February.

Dynamics 365 for Sales Professionals

Along the same lines of a more basic experience for customers with less intensive needs, Microsoft is also rolling out a new module called Dynamics 365 for Sales Professionals on April 2.

Phillips described the Sales Professional version as a streamlined version of Dynamics 365 for Sales, with an emphasis in the new version on core salesforce automation capabilities. "From opportunity management to sales planning and performance management, the solution optimizes sales processes and productivity," Phillips said.

New Intelligence Capabilities

The spring release is also productizing the years of work and millions invested in artificial intelligence research, Phillips said. "These investments are infused throughout Dynamics 365 and are now available with the spring 2018 release," he said.

The highest-profile examples are in a feature set Microsoft is calling "embedded intelligence" in the Dynamics 365 for Sales application. Microsoft previously referred to the feature set as Relationship Insights.

The idea is that embedded intelligence leverages information created in the sales process to recommend actions. The initial spring release on April 2 will include Relationship Assistant, Auto-Capture and E-mail Engagement. Relationship Assistant analyzes customer interactions in Dynamics 365, Exchange and other sources to generate action cards that suggest next steps. Auto-Capture takes a salesperson's Outlook messages and appointments that relate to Dynamics 365 deals and offers to track them. E-mail Engagement tracks whether recipients open messages and attachments, click through links or reply to messages, and allows scheduling of e-mails and reminders.

As part of the Spring '18 release of Dynamics 365, Microsoft is readying a public preview of artificial intelligence technologies to rate the health of a salesperson's relationship with potential customers in the pipeline. (Source: Microsoft)

Common Data Service for Analytics and Apps

The launch will also include previews for a new set of data integration services built on the common data model -- one for Power BI and one for PowerApps.

The Common Data Service (CDS) represents another Microsoft run at the age-old problem of integrating data from multiple sources and trying to wrangle actionable business intelligence out of the combined data.

"The CDS for Analytics capability will reduce the complexity of driving business analytics across data from business apps and other sources," said Arun Ulag, Microsoft general manager of Intelligence Platform Engineering, in a blog post. Common Data Service for Analytics works with Power BI.

Ulag said CDS for Analytics expands Power BI with the introduction of an extensible business application schema. "Pre-built connectors for common data sources, including Dynamics 365, Salesforce and others from Power BI's extensive catalog, will be available to help organizations access data from Microsoft and third parties. And organizations will be able to add their own data," he said.

One of those pre-built Power BI apps, designed for Dynamics 365 for Sales, is supposed to enter the public preview stage during the second quarter of this year. Called Power BI for Sales Insights, the app will provide relationship analytics. The purpose is to help salespeople manage pipeline by using AI to rate the health of customer relationships with techniques including sentiment analysis. Another CDS for Analytics-based Power BI app coming to public preview in the second quarter is called Power BI for Service Insights.

On the PowerApps side, Microsoft is unveiling a preview of Common Data Service for Apps on April 2. When it ships, it will come with PowerApps and offer capabilities for modeling business solutions within platforms like Dynamics 365 and Office 365.

Others of the hundreds of new features in the spring release aim to unify Microsoft's business applications and improve integrations with Microsoft technologies, including Outlook, Teams, SharePoint, Stream, Flow, Azure, LinkedIn, Office 365 and Bing.

Microsoft will be providing more detail on March 28 in a Business Applications Virtual Spring Launch Event.

Posted by Scott Bekker on March 21, 2018

Intel is officially declaring that it's done with the massive effort to provide software updates to protect against Spectre and Meltdown for all the products it has released in the last five years.

The chip maker also says it has redesigned the processors being released later this year to offer additional protections.

"We have now released microcode updates for 100 percent of Intel products launched in the past five years that require protection against the side-channel method vulnerabilities discovered by Google," said Intel CEO Brian Krzanich in a written statement Thursday.

The declaration would bring to a close a promise Krzanich made in a keynote at CES in the second week of January just after news broke that Intel and its OEM and software partners were working feverishly to fix the flaws, which represented a serious theoretical threat but did not seem to have been exploited in the wild.

At the time, Krzanich said Intel expected to issue fixes for 90 percent of its processors within a week and fixes for all of them by the end of January. However, complications arose involving bricked systems, server performance issues and reboot problems.

While Intel is done working on the microcode, that doesn't necessarily mean all systems can be patched yet. Because customers get the fixes through their OEMs rather than from Intel, it could still take time for some of Intel's OEMs to test and approve the patches on their supported systems.

At the same time, Intel redesigned forthcoming processors shipping later this year to address two of the three variants of the Spectre/Meltdown family identified by Google Project Zero's reporting.

"While Variant 1 will continue to be addressed via software mitigations, we are making changes to our hardware design to further address the other two. We have redesigned parts of the processor to introduce new levels of protection through partitioning that will protect against both Variants 2 and 3," Krzanich said Thursday. "These changes will begin with our next-generation Intel Xeon Scalable processors (code-named Cascade Lake) as well as 8th Generation Intel Core processors expected to ship in the second half of 2018."

Posted by Scott Bekker on March 15, 2018