Acumatica, the Bellevue, Wash.-based company run by former Microsoft channel chief Jon Roskill, on Monday announced a $25 million funding round that Roskill believes will accelerate Acumatica's cloud ERP business.

Leading the Series C preferred round is Accel-KKR, a Menlo Park, Calif.-based technology-focused investment firm with $4.3 billion in capital commitments. AKKR is joined in the round by existing investors.

"For Acumatica, what this means is that we're now bringing a true Grade A, growth equity firm into the Acumatica fold. They're going to take a board seat as part of this," said Roskill, CEO of Acumatica since 2014, in an interview. "One of the things that's exciting for us about this is that AKKR doesn't just bring money to the table, but they've got significant resources that we can leverage, as well, whether it's around strategy and planning; recruiting and HR; and, in particular, expansion-oriented resources."

Acumatica's sweet spot is ERP customers with revenues between about $10 million and $500 million looking to move to the cloud. Roskill said that according to analysts at Gartner and IDC, only 18 to 20 percent of companies in that segment have moved to the cloud, but most of the rest are ready to make the move very soon.

Given Acumatica's 100 percent channel focus, the investments the company has planned will all affect Acumatica's 350 North American partners, as well as potentially affect Microsoft partners looking for a cloud ERP solution, Roskill said. "It's all going to go into building better products, accelerating product development and accelerating go to market," Roskill said.

Expect to see more focus on verticals, where Acumatica over the last 18 months has rolled out editions focused on field service, commerce, manufacturing and construction. "[With] this money, we're going to accelerate our efforts into some of these verticals, and I think you can expect to see us go after a couple others over the coming years," Roskill said.

A strong Microsoft partner itself, Acumatica runs on a Microsoft infrastructure stack and has integrations and add-ins with Office 365, Power BI and LinkedIn. Yet that relationship doesn't stop Acumatica from going after Microsoft Dynamics partners.

Customers are going to the cloud "with or without you," Roskill said. "You've got to get the skills, and you've got to get the product, and Acumatica is certainly here to help those that are interested," said Roskill after arguing that the Acumatica code base is substantially more modern than what Microsoft has put in the cloud so far on the ERP side for midmarket customers.

Posted by Scott Bekker on June 18, 2018

When it comes to broad, new business opportunities for Microsoft partners, security stands apart.

No matter how specialized a partner may be, security concerns are on the rise and they cut across every vertical and niche. Between the need for customers to up their security game and a global skills shortage, there are plenty of ways for partners to rush in.

In a recent online briefing for U.S. partners, Microsoft Vice President for Enterprise and Security Ann Johnson singled out security operations centers (SOCs) as a huge emerging opportunity.

First, Johnson, a veteran of senior posts at Qualys Inc. and RSA Security LLC before joining Microsoft, made a case for managed security services.

"All of our customers are saying, 'Look, we don't have the resources to actually drive a fully robust security program,' especially when you get into small to midsize business. They just can't hire the security professionals. If I were a partner today, I would build a managed security service offering. Of course, I would build it on top of Microsoft tooling, but I would build a managed service security offering so you can supplement your customers' capabilities, you can supplement your customers' skills and you can do it at scale," Johnson said.

Within that broader area, Johnson zeroed in on the need for managed SOCs. A SOC can be defined as a team or a facility dedicated to preventing, detecting, assessing and responding to security incidents. Regulatory compliance requirements are a major driver for SOCs.

"I would focus on managed security operations centers, because, candidly, managed SOCs are places where customers need a lot of help and you can get a lot of breadth across their environment by focusing on that area," she said. "You can also drive a lot of value to your customers by actually helping them build out their SOC and run their SOC, and customers are asking us today for that type of help. So if I were a partner, I would focus on building managed SOC services."

Johnson enumerated various tiers of SOC-related opportunities, from architecting to building to automating to running the facilities.

Johnson and Microsoft are not the only ones pointing to the opportunity. In a press Q&A in October, Gartner analyst Siddharth Deshpande made a strong case for managed SOC services.

"Building a SOC -- or generally creating some form of internal security operations capabilities -- is a costly and time-consuming effort that requires ongoing attention in order to be effective. Indeed, a great number of organizations (including some large organizations) choose not to have a SOC. Instead, they choose other security monitoring options, such as engaging a managed security service provider (MSSP)," Deshpande said.

Posted by Scott Bekker on June 14, 2018

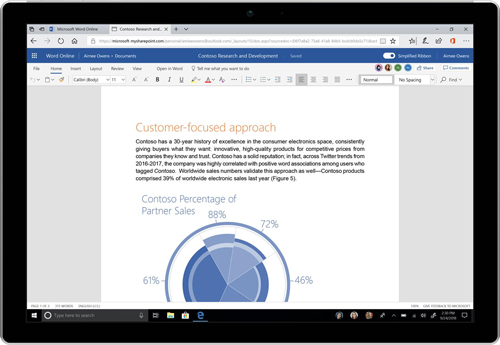

Microsoft on Wednesday unveiled big feature changes for the Office user experience, including a simplified ribbon, new colors and icons, and a search overhaul, about a month and a half behind Google's release of major updates for Gmail.

The changes will roll out in stages over the next few months, starting with Web versions, and will be exclusive to Office.com and Office 365. Apparently remembering the significant user backlash that accompanied the original rollout of the Office ribbon, Microsoft is taking care to present the changes as a work-in-progress that will be tested with initial user groups and modified as necessary, rather than blasted out to the billion-plus monthly users of Office.

"We plan on carefully monitoring usage and feedback as the changes roll out, and we'll update our designs as we learn more," wrote Jared Spataro, corporate vice president for Office and Windows Marketing, in a blog post announcing the updates.

Additionally, user control over implementing the changes is a key design principle. "We want to give users control, allowing them to toggle significant changes on and off," Spataro said. In fact, there is no current schedule to push the ribbon changes to Word, Excel and PowerPoint for Windows. "They're the preferred experience for users who want to get the most from our apps. Users have a lot of 'muscle memory' built around these versions, so we plan on being especially careful with changes that could disrupt their work," he wrote.

The ribbon takes up less screen real estate and is more context-driven, with Microsoft trying to anticipate what a user wants to do. An underline appears below the main menu tabs, with options appearing underneath the tab in a single line that uses new colors and icons intended to provide more contrast and more intuitive navigation.

In a video demonstration, Jon Friedman, chief designer for Office at Microsoft, opened a Word document from the Office 365 interface to demonstrate a new speediness to the process. "Office is rebuilt on a modern platform to be faster than ever," Friedman said.

The new search functionality includes a more prominent search bar and brings in what Microsoft calls "zero query search," in which recommendations are presented based on Microsoft's predictive algorithms and data about the user's behavior and working relationships.

Microsoft shared several milestones in its timeline for rolling out the new Office features:

- All commercial users of Office.com, SharePoint Online and the Outlook mobile app have immediate access to the search changes.

- Select consumer users of Web versions of Word will see the simplified ribbon this week.

- The color and icon changes will come to the Web versions of Word shortly.

- Select Insiders using Windows versions of Word, Excel and PowerPoint will see color and icon changes later this month.

- Color and icon changes will come to Outlook for Windows in July.

- Select Insiders will see the simplified ribbon on Outlook for Windows in July.

- In August, commercial users of Outlook on the Web will see the search changes.

- Also in August, Outlook for Mac users will get the color and icon changes.

Posted by Scott Bekker on June 13, 2018

Microsoft highlighted partners doing work with artificial intelligence (AI), Big Data and open source among other categories this year in its annual Partner of the Year awards announced this month.

"These companies are bringing cutting-edge solutions to complex business challenges and providing digital transformation opportunities for their customers," said Gavriella Schuster, corporate vice president for One Commercial Partner at Microsoft, in a statement announcing the 39 category winners, as well as country-level winners.

In addition to publishing the full list (below), Microsoft released a video highlighting a dozen partners doing work in categories and verticals that are strategic for the company. Those categories and winners included Artificial Intelligence, Insight; Big Data Analytics, Cognizant Technology Solutions; Open Source Data & AI, Cloudera; DevOps, Xpirit Netherlands; Modern Workplace Transformation, Dimension Data; and Azure Compete, GTPLUS.

The verticals and winners included Alliance Global Commercial ISV, AT&T; Microsoft CityNext, Bentley Systems; Financial Services, Vector Risk; Health, Solidsoft Reply; Learning, Digicomp Academy AG; and Partner for Social Impact, ProServeIT Corp.

Winners will be recognized next month at Microsoft Inspire in Las Vegas.

Global Category Winners

Alliance Global Commercial ISV Partner of the Year

Alliance SI Partner of the Year

Application Innovation Partner of the Year

Artificial Intelligence Partner of the Year

- Winner: Insight

- Finalist: KPMG Consulting Co. Ltd.

- Finalist: eSmart Systems

- Finalist: RedPoint Global Inc.

Azure Compete Partner of the Year

- Winner: GTPLUS

- Finalist: Wragby Business Solutions and Technologies Ltd.

- Finalist: Convergent Computing (CCO)

- Finalist: Infront Consulting Group

Big Data Analytics Partner of the Year

Customer Experience Partner of the Year

- Winner: Content and Code

- Finalist: Quadrasystems.net India Private Ltd.

- Finalist: Insight

- Finalist: Qorus Software

Data Estate Modernization Partner of the Year

- Winner: pmOne AG

- Finalist: Northdoor plc

- Finalist: Cognizant Technology Solutions

- Finalist: Datometry

Datacenter Transformation Partner of the Year

- Winner: Ensono

- Finalist: 10th Magnitude

- Finalist: Hanu Software Inc.

- Finalist: Rackspace

DevOps Partner of the Year

- Winner: Xpirit Netherlands

- Finalist: Canarys Automations Private Ltd.

- Finalist: InCycle Software

- Finalist: Nebbia Technology

Dynamics 365 for Field Service Partner of the Year

- Winner: Velrada

- Finalist: eCraft Oy Ab

- Finalist: Hitachi Solutions Ltd.

- Finalist: DXC Eclipse

Dynamics 365 for Talent Partner of the Year

Dynamics Customer Service Partner of the Year

- Winner: eBECS

- Finalist: Fusion5

- Finalist: Accenture/Avanade

- Finalist: PowerObjects, an HCL Technologies company

Dynamics for Finance and Operations Partner of the Year

- Winner: Accenture/Avanade

- Finalist: Infosys Ltd.

- Finalist: AKA Enterprise Solutions (Formerly InterDyn AKA)

- Finalist: Hitachi Solutions

Dynamics Sales Partner of the Year

- Winner: NuSoft

- Finalist: Cognizant Technologies

- Finalist: KORUS Consulting

- Finalist: Ecuity Edge

Education Partner of the Year

Financial Services Partner of the Year

- Winner: Vector Risk

- Finalist: V.R.P. Veri Raporlama Programlama Bilisim Yazilim ve Danismanlik Hizmetleri Ticaret A.S.

- Finalist: Finastra

- Finalist: Insight

Government Partner of the Year

- Winner: Axon Enterprises Inc.

- Finalist: Black Marble

- Finalist: Pythagoras Communications Ltd.

- Finalist: EY

Health Partner of the Year

- Winner: Solidsoft Reply

- Finalist: KPMG LLP

- Finalist: DXC Eclipse

- Finalist: KenSci

Indirect Provider Partner of the Year

- Winner: Tech Data

- Finalist: Westcoast Ltd.

- Finalist: Ingram Micro

Intelligent Communications Partner of the Year

- Winner: Sada Systems

- Finalist: Communicativ

- Finalist: bluesource

- Finalist: Modality Systems Ltd.

Internet of Things Partner of the Year

Learning Partner of the Year

Manufacturing Partner of the Year

- Winner: ICONICS

- Finalist: ABB Group

- Finalist: Icertis Inc.

- Finalist: PROS Inc.

Media & Communications Partner of the Year

- Winner: Advvy

- Finalist: NV Interactive

- Finalist: Avid

- Finalist: Hewlett-Packard Enterprise LLC

Microsoft CityNext Partner of the Year

- Winner: Bentley Systems

- Finalist: Meemim Inc.

- Finalist: Black Marble

- Finalist: Cubic Transportation Systems

Modern Desktop (formerly Powered Device) Partner of the Year

- Winner: Insight

- Finalist: AMTRA Solutions

- Finalist: Dell

- Finalist: DXC

Modern Workplace Transformation Partner of the Year

- Winner: Dimension Data

- Finalist: Logicalis

- Finalist: Sulava Oy

- Finalist: Otsuka Corp.

Open Source Applications & Infrastructure on Azure Partner of the Year

- Winner: 10th Magnitude

- Finalist: Nordcloud

- Finalist: 4ward

- Finalist: SNP Technologies Inc.

Open Source Data & AI Partner of the Year

- Winner: Cloudera

- Finalist: PT Mitra Integrasi Informatika

- Finalist: KORUS Consulting

- Finalist: Application Consulting Training Solutions

Partner for Social Impact Partner of the Year

Partner Seller Excellence in Technology, Sales and/or Licensing Partner of the Year

- Winner: Erik Moll, COMPAREX Canada

- Finalist: Michael Jonsson, Avanade

- Finalist: Andrew Mackay, Teambase

- Finalist: Mark Pierce, New Signature

Platform Partner of the Year

- Winner: Provance

- Finalist: Anywhere.24 GmbH

- Finalist: (Joint submission) PROS Inc., Icertis and VeriPark

- Finalist: SAGlobal

Power BI Partner of the Year

- Winner: Slalom

- Finalist: Truenorth Corp.

- Finalist: IT-Logix AG

- Finalist: Teambase

Project and Portfolio Management Partner of the Year

- Winner: CPS

- Finalist: Prosperi

- Finalist: Projectum

- Finalist: Sensei Project Solutions

Retail Partner of the Year

- Winner: Teambase

- Finalist: Blue Yonder GmbH

- Finalist: Synerise S.A.

- Finalist: Tallan Inc.

SAP on Azure Partner of the Year

- Winner: Accenture/Avanade

- Finalist: Infosys Ltd.

- Finalist: CoreToEdge

- Finalist: Brillio

Security and Compliance Partner of the Year

Teamwork Partner of the Year

- Winner: Adopt & Embrace

- Finalist: Quadrasystems.net India Private Ltd.

- Finalist: Rapid Circle

- Finalist: Content and Code

Country Winners

Albania: BTS Ltd.

Argentina: Softtek

Armenia: Dom-Daniel

Australia: rhipe

Austria: Nagarro GmbH

Azerbaijan: Respect Solutions

Bahrain: Computer World W.L.L.

Bangladesh: Aamra Technologies Limited

Belgium: COMPUTERLAND

Bermuda: Fireminds

Bolivia: SoftwareOne

Brazil: Westcon Brasil

Brunei: Concepts Technologies

Bulgaria: COMPAREX Bulgaria OOD

Cambodia: SL International Company Ltd.

Canada: Long View

Cayman Islands: SALT Technology Group

Chile: Kudaw S.A.

China: Shanghai Nanyang Wanbang Software Technology Co. Ltd.

Colombia: Bizagi

Costa Rica: Conzultek

Côte d'Ivoire: INOVA

Croatia: Recro d.d.

Curaçao: Inova Solutions

Cyprus: COMIT Solutions Ltd.

Czech Republic: ARTEX informační systémy s.r.o.

Denmark: ProActive A/S

Dominican Republic: Solvex Dominicana SRL

Ecuador: BUSINESS IT

Egypt: HITS Technologies

El Salvador: Corporación Orbital

Estonia: Primend

Finland: Onrego Oy

France: Capgemini Sogeti

Georgia: LLC SYNTAX

Germany: pmOne AG

Greece: InTTrust SA

Guatemala: Sega

Honduras: Sega

Hong Kong SAR: Superhub Limited

Iceland: Advania

India: Embee Software Pvt. Ltd.

Indonesia: Kreatif Dinamika Integrasi

Ireland: Codec.ie

Israel: Aztek Technologies

Italy: Avanade Italy

Jamaica: Inova Solutions

Japan: Japan Business Systems Inc.

Jordan: Specialized Technical Services

Kazakhstan: ASPEX

Kenya: Cloud Productivity Solutions Limited

Korea: GTPLUS

Kuwait: EBLA Computer Consultancy

Laos: Top Value Service Sole Co. Ltd.

Latvia: SQUALIO Cloud Consulting

Lebanon: Comprehensive Computing Innovations

Lithuania: Telia

Luxembourg: (Joint submission) ELGON SA and AINOS SA

Malaysia: Enfrasys Consulting

Malta: Computime Ltd.

Martinique: INFODOM Martinique

Mauritius: MC3

Mexico: Readymind

Morocco: CASANET

Myanmar: Access Spectrum Company Limited

Namibia: Salt Essential IT

Nepal: Thakral One Nepal Pvt. Ltd.

Netherlands: InSpark

New Zealand: Intergen

Nicaragua: Sega Nicaragua S.A.

Nigeria: Ha-Shem Network Services Ltd.

Oman: KalSoft LLC

Pakistan: Systems Limited

Panama: IT Quest Solutions

Peru: G&S Gestión y Sistemas SAC

Philippines: Tech One Global Phils. Inc.

Poland: Synerise S.A.

Portugal: Armis

Puerto Rico: Truenorth Corporation

Qatar: Information & Communication Technology W.L.L.

Romania: SC Avaelgo SRL

Russia: Bright Box

Saudi Arabia: eSense Software

Senegal: FTF

Serbia: Informatika a.d.

Singapore: NCS Pte. Ltd.

Slovenia: Stroka product d.o.o.

South Africa: Britehouse, a Division of Dimension Data

Spain: Prodware Spain S.A.

Sri Lanka: H One Pvt. Ltd.

Sweden: Haldor AB

Switzerland: Codit

Taiwan: Acer e-Enabling Service Business Inc.

Thailand: MVERGE Co. Ltd.

Turkey: Makronet Bilgi Teknolojileri

Ukraine: SMART business

United Arab Emirates: Teambase

United Kingdom: Kainos

United States: Icertis Inc.

Uruguay: Arkano Software

Venezuela: CONSEIN C.A.

Vietnam: CMC System Integration

Posted by Scott Bekker on June 11, 2018

Microsoft envisions partners helping to drive a major push of the GitHub software development and version control platform into the enterprise.

Microsoft announced a deal on Monday to acquire GitHub for $7.5 billion in stock. The transaction has been approved by both companies' boards and is expected to close before the end of the year.

Microsoft CEO Satya Nadella identified enterprise usage as one of three major opportunities around GitHub in a blog about the deal, and called out partners as having a role.

"We will accelerate enterprise developers' use of GitHub, with our direct sales and partner channels and access to Microsoft's global cloud infrastructure and services," Nadella said.

Of the other two opportunities, one involved bringing Microsoft developer tools and services to GitHub's huge community of developers, many of whom primarily use open source tools. The other opportunity is deepening Microsoft's engagement with developers at every stage of the development lifecycle, Nadella said, "from ideation to collaboration to deployment to the cloud."

The GitHub community currently includes 28 million developers with more than 85 million code repositories. Microsoft regularly boasts of being the most active organization on GitHub. At the Microsoft Build conference in May, Microsoft said it had the most open source project contributors on the platform in 2016. With the acquisition announcement, Nadella claimed that Microsoft's 2 million "commits," or updates made to projects, make it the most active organization on GitHub.

Nadella's comments suggest that enterprise organizations, with development processes that pre-date the 10-year-old GitHub model, could face some adjustments in processes like version control that become integrated into Visual Studio and other parts of the Microsoft developer platforms.

Meanwhile, Nadella and GitHub Co-Founder Chris Wanstrath sought to assure GitHub's massive user community that big business-focused Microsoft wouldn't wreck the open source development platform.

"Most importantly, we recognize the responsibility we take on with this agreement. We are committed to being stewards of the GitHub community, which will retain its developer-first ethos, operate independently and remain an open platform," Nadella promised. "Developers will continue to be able to use the programming languages, tools and operating systems of their choice for their projects -- and will still be able to deploy their code on any cloud and any device."

In his own blog entry, Wanstrath pointed to Microsoft's ongoing engagement with the open source community and its handling of recent acquisitions as reasons to trust Microsoft's intentions. "Their work on open source has inspired us, the success of the Minecraft and LinkedIn acquisitions has shown us they are serious about growing new businesses well, and the growth of Azure has proven they are an innovative development platform," Wanstrath said.

Wanstrath will become a technical fellow at Microsoft once the acquisition closes, reporting to Microsoft Cloud + AI Group Executive Vice President Scott Guthrie. Nat Friedman, the founder of Xamarin, which was acquired by Microsoft in 2016, will become CEO of GitHub, also reporting to Guthrie.

Posted by Scott Bekker on June 04, 2018

Microsoft announced its latest companywide reorg back in March, but with just a month to go until the end of its fiscal year, its executive roster is still in flux.

In a memo dated March 29, Microsoft CEO Satya Nadella unveiled a major reorganization that included the departure of Terry Myerson, head of the Windows and Devices Group and longtime Microsoft veteran. Myerson was to stay at Microsoft for a while to help with the transition.

The reorg was widely viewed as Nadella demoting Windows, the longtime centerpiece of Microsoft's strategy, to further emphasize artificial intelligence (AI), cloud computing and mixed reality.

To execute on the strategy, Nadella formed two new engineering units. One was Experiences & Devices, led by Corporate Vice President Rajesh Jha. The other was Cloud + AI Platform, led by Scott Guthrie, head of Microsoft Cloud and Enterprise.

All About Microsoft's Mary Jo Foley reported Thursday that the executive moves, even among those specifically named as continuing their positions in the March memo, are not finished.

Kudo Tsunoda is out as corporate vice president for Next Gen Experiences, Foley reported. Tsunoda had reported to Jha and was focused on mixed reality, 3-D, story remix, photos, HoloLens and other related projects. Foley reported that Tsunoda is looking for another role inside the company and the team has been disbanded and moved to other places.

Several teams in other areas are also being shifted around. Some teams on Executive Vice President Jason Zander's Azure and Windows engineering organization are moving into Jha's unit, and the design team and Windows Insider program are moving from Windows engineering into Corporate Vice President Joe Belfiore's Windows client experience team, Foley reported.

Posted by Scott Bekker on June 01, 2018

A year after launching a platform based on the idea that Microsoft Azure was both a powerful and a challenging platform for IT as a Service (ITaaS), Chicago-based Nerdio is taking its vision for managed service providers (MSPs) and small and medium businesses (SMBs) to another level.

Nerdio for Azure (NFA) formally launched last year as a toolset to help MSPs get their SMB customers up and running on an ITaaS platform based on Azure. NFA included tools for provisioning, managing and optimizing entire environments into the Microsoft public cloud. An NFA-enabled environment could include desktops, as either virtual desktop infrastructure or via Remote Desktop Services (RDS); server instances with automated backup; and Office 365.

With price estimation tools included that allowed what-if forecasting, MSPs using the platform were able to predict what such environments would cost, enabling them to price in their own margins and reduce the possibility of price shocks when the Azure bill came each month from Microsoft. Further, the platform contained automation and orchestration tools for tasks like upsizing line-of-business server virtual machines during work hours and downsizing them at night or on weekends to control Azure costs.
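The kind of what-if forecasting described above comes down to simple arithmetic. The following is a minimal sketch, not Nerdio's actual tooling: the hourly rates, the work schedule and all function names are hypothetical values chosen only to illustrate how scheduled upsizing and downsizing changes a weekly bill, and how an MSP might price in a margin.

```python
HOURS_PER_WEEK = 24 * 7


def scheduled_weekly_cost(large_rate, small_rate,
                          work_hours_per_day=10, work_days=5):
    """Weekly cost of one VM that runs at the large size only during
    work hours and at the small size the rest of the week.
    Rates are hypothetical per-hour prices."""
    large_hours = work_hours_per_day * work_days
    small_hours = HOURS_PER_WEEK - large_hours
    return large_hours * large_rate + small_hours * small_rate


def always_on_weekly_cost(large_rate):
    """Weekly cost if the VM stays at the large size around the clock."""
    return HOURS_PER_WEEK * large_rate


def customer_price(cost, margin):
    """Price to quote so that `margin` (a fraction of the price)
    is retained by the MSP after paying `cost`."""
    if not 0 <= margin < 1:
        raise ValueError("margin must be at least 0 and less than 1")
    return cost / (1 - margin)


if __name__ == "__main__":
    large, small = 0.40, 0.10  # hypothetical hourly rates, not Azure prices
    scheduled = scheduled_weekly_cost(large, small)
    always_on = always_on_weekly_cost(large)
    print(f"scheduled: {scheduled:.2f}  always on: {always_on:.2f}")
    print(f"quote at 20% margin: {customer_price(scheduled, 0.20):.2f}")
```

With these made-up rates, the scheduled VM spends 50 hours a week at the large size and 118 hours at the small size, which is where the savings over an always-on large VM come from.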

In the interim, the platform has attracted dozens of partners and Nerdio inked a key strategic partnership earlier this year with SherWeb, one of Microsoft's Indirect Providers in the Cloud Solution Provider (CSP) program. Nerdio does not handle licensing of Azure or Office 365, so partners need to work with Microsoft directly or with a provider like SherWeb to sell the subscriptions to customers.

Yet a key obstacle to MSP adoption is the full-stack approach of NFA. Not all -- and in fact probably relatively few -- MSPs and SMBs are ready to throw all of their infrastructure up into the Azure cloud just yet, Nerdio CEO Vadim Vladimirskiy said in an interview on Tuesday.

"We believe, and I think most of our partners believe, that they will have their IT in the cloud at a certain point in the future. All at once? Probably not. What we're working on now is to simplify that process," Vladimirskiy said.

While Nerdio is a forward-thinking company that has been providing private cloud-based, full IT stack solutions to its own customers for years, the company recognizes that most partners and their customers are interested in smaller steps initially.

Looking over the technology platform Nerdio has built, executives there believe their platform has value for more limited Azure implementations run by MSPs on behalf of both SMBs and even enterprises. While the scope of the stack is smaller, the market opportunity could be many times larger.

"Because not all organizations are necessarily ready to go full IT stack in Azure, we wanted to make it as easy as possible for more MSPs to address a wider range of customer use cases, and therefore capture more business through Azure," Vladimirskiy said.

What Nerdio launched this week are four new "use cases" for NFA. Running from the least comprehensive to the most, the offerings are Servers, Azure Hosted RDS, Desktop as a Service (DaaS) and ITaaS. The last one, ITaaS, is basically the original, full-stack NFA offering.

The use cases are defined as high-level guides for MSPs, complete with a sample deployment, simple implementation steps within NFA and customer pricing recommendations.

NFA's Servers use case could be a logical place to start for MSPs looking to move some workloads into Azure without building up a lot of Azure expertise. The use case handles extending the existing network and Active Directory into Azure, migrating line-of-business applications and database servers, managing server infrastructure in Azure and managing backups in Azure.

"We make the creation of servers a trivial thing," Vladimirskiy said. One other point of interest around that server management use case is a pricing loophole. Because NFA is priced per-desktop, per-month, MSPs that try out the Servers use case won't see a bill from Nerdio.

While much of the appeal for NFA is within the SMB market, the Azure Hosted RDS use case comes in response to enterprise demand. Enterprise environments are generally too complicated to roll completely into a single ITaaS solution, but many organizations are looking for help with RDS. "They didn't want to be constantly updating their RDS environment. Also, the datacenter is typically not ideally situated for the bulk of their users. Azure is a natural deployment because you can place the right amount of resources next to the right user groups," Vladimirskiy said.

The DaaS use case is for organizations that are looking for Windows desktop access from any connected device but that don't necessarily want the rest of the stack.

Finally, the ITaaS use case has everything that NFA can manage, including servers, desktops and backup from Azure, and security, e-mail, collaboration and file storage from Office 365.

According to Nerdio, the use cases resulted from MSP feedback, and the company will continue to look at additional use cases. Some current candidates include file sharing-only or messaging-only scenarios.

Posted by Scott Bekker on May 29, 2018

Managed service providers (MSPs) who manage small office/home office (SOHO) routers and network-attached storage (NAS) devices for SMB customers are facing a major new headache in the destructive malware dubbed VPNFilter that has spread to an estimated 500,000 devices in 54 countries.

Cisco Talos Intelligence Group, which conducts broad industry research for vulnerabilities beyond just Cisco hardware, on Wednesday called on owners of the devices and ISPs who provide routers to customers to reset the devices to their factory settings and reboot them, a temporary measure that provides only a limited fix for the issue.

Due to the sophistication of VPNFilter and growing concern over the potential for an imminent attack leveraging the malware, the warning was released before research on the flaw and remediation for the problems were complete. MSPs will need to monitor security research organizations and vendor contacts for ongoing updates in the coming days and weeks.

Talos said VPNFilter has been found on routers manufactured by Linksys, MikroTik, NETGEAR and TP-Link, as well as NAS devices made by QNAP. No Cisco devices, or devices from other manufacturers, have been found to be infected yet.

However, in a lengthy blog post on the issue, Talos said it assesses with high confidence that its list of affected devices is incomplete. "Due to the potential for destructive action by the threat actor, we recommend out of an abundance of caution that these actions be taken for all SOHO or NAS devices, whether or not they are known to be affected by this threat," the post stated.

While they have been tracking the malware for a few months, Talos researchers moved up their public disclosure plans over concerns that efforts to spread the multistage modular malware platform had accelerated this month. The month of May brought port scans indicative of attempts to infect additional MikroTik and QNAP devices in more than 100 countries; further evidence of code overlap between VPNFilter and the BlackEnergy malware, which was identified in previous attacks against devices in Ukraine; and sharp spikes in infection activity, especially in Ukraine.

The precise exploitation route has not yet been identified, although Talos does not believe any zero-day flaws are involved. The company said known vulnerabilities in the affected devices provide sufficient avenues for infection.

The malware itself appears to be both sophisticated and versatile. Talos described it as having three stages. The first stage is designed to gain a foothold on the system and persists despite a reboot, meaning the current workaround cannot wipe out that portion of the threat. The first stage also features redundant mechanisms to connect to a command-and-control (C2) server.

That connection causes the infected device to download the Stage 2 malware, which does not persist after a reboot. Capabilities of the second stage include file collection, command execution, data exfiltration, device management and, in some versions, self destruction. The self-destruct capability is particularly nasty in that it overwrites part of the device firmware and then causes a reboot, making the device unusable.

"The destructive capability particularly concerns us. This shows that the actor is willing to burn users' devices to cover up their tracks, going much further than simply removing traces of the malware. If it suited their goals, this command could be executed on a broad scale, potentially rendering hundreds of thousands of devices unusable, disabling internet access for hundreds of thousands of victims worldwide or in a focused region where it suited the actor's purposes," the blog post stated.

Even devices infected with versions of the Stage 2 malware that lack the self-destruct capability would be in danger in a mass-destruction attack, given the command execution capability of the base-level malware.

A third stage found in some devices consists of modules that plug into the Stage 2 malware. One module discovered so far includes a packet sniffer capable of stealing Web site credentials and monitoring Modbus SCADA protocols. Another module is designed to allow the Stage 2 malware to make connections over Tor.

The Talos blog post includes links to Snort rules for detecting VPNFilter and for protecting against known vulnerabilities in the affected devices, as well as anti-virus signatures for VPNFilter.

Posted by Scott Bekker on May 24, 2018

Any independent software vendor (ISV) looking for growth opportunities in the enterprise needs to take a hard look at artificial intelligence (AI) right now, industry analysts suggest. And for the next few years, at least, the more niche the solution, the better.

"One of the biggest aggregate sources for AI-enhanced products and services acquired by enterprises between 2017 and 2022 will be niche solutions that address one need very well," said John-David Lovelock, research vice president at Gartner, in a statement. "Business executives will drive investment in these products, sourced from thousands of narrowly focused, specialist suppliers with specific AI-enhanced applications."

Lovelock's comments come as part of a Gartner study released in April that projected huge growth for AI over the next few years. Looking at the global business value derived from AI, Gartner projects a total of $1.2 trillion in 2018, which is an increase of 70 percent from 2017. Gartner expects that growth rate to flatten a bit over the next four years, but remain in the double digits with a projected business value of $3.9 trillion in 2022.
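The arithmetic behind those figures can be checked with a short sketch. It uses only the numbers quoted above ($1.2 trillion in 2018, a 70 percent increase over 2017, and $3.9 trillion in 2022). The function names are illustrative, and the smooth compound rate is an assumption made for the calculation, since Gartner's year-by-year figures are not given here.

```python
def implied_prior_value(current: float, growth_rate: float) -> float:
    """Value one year earlier, given this year's value and the growth rate."""
    return current / (1.0 + growth_rate)


def cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to get from start to end."""
    return (end / start) ** (1.0 / years) - 1.0


# Figures quoted in the article, in trillions of U.S. dollars.
value_2018 = 1.2
value_2022 = 3.9
growth_2017_to_2018 = 0.70

# Derived values (assumption: growth compounds evenly from 2018 to 2022).
value_2017 = implied_prior_value(value_2018, growth_2017_to_2018)
average_growth = cagr(value_2018, value_2022, 2022 - 2018)

print(f"Implied 2017 value: ${value_2017:.2f} trillion")
print(f"Average annual growth, 2018-2022: {average_growth:.1%}")
```

Under those assumptions, the implied 2017 figure is roughly $0.7 trillion, and the average growth needed to reach the 2022 projection is about 34 percent a year, which is well below 70 percent but still in the double digits.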

Far from representing a reduction in the opportunity, Gartner presents the flattening as more of a pause. Early growth will be fueled by AI in customer experience applications that drive customer growth and retention, with further AI-driven business value coming from using AI to reduce costs, Gartner expects. It's after that, starting in about 2021, that AI will truly change the playing field.

"New revenue will become the dominant source as companies uncover business value in using AI to increase sales of existing products and services, as well as to discover opportunities for new products and services," Lovelock said. "Thus, in the long run, the business value of AI will be about new revenue possibilities."

Some of those long-tail technologies include deep neural networks for data mining, pattern recognition across huge datasets, and decision automation systems that use AI to automate tasks or optimize business processes.

Nearer-term, a lot of the niche ISV opportunities exist in the rush to create virtual agents and chatbots across the enterprise for reducing costs, with agents accounting for nearly half of business value in 2018 in Gartner's model.

Analysts at IDC also see an ISV gold rush in AI. "Interest and awareness of AI is at a fever pitch," said David Schubmehl, a cognitive/AI systems research director at IDC, in a statement in March. "IDC has estimated that by 2019, 40% of digital transformation initiatives will use AI services and by 2021, 75% of enterprise applications will use AI."

While Schubmehl said every industry and every organization should be evaluating AI for business process and go-to-market efficiencies, IDC identifies several verticals where the action is the most intense.

In retail, IDC is looking for firms to spend on the order of $3.4 billion on AI solutions, including automated customer service agents, expert shopping advisers and product recommendations.

The banking industry was an early leader, but is passing the torch to retail this year, IDC's forecasts show. That financial sector spending is set to hit about $3.3 billion and has an emphasis on automated threat intelligence and prevention systems, fraud analysis and investigation, program advisers and recommendation systems.

Other high-spending verticals for 2018 include discrete manufacturing ($2 billion) and health care providers ($1.7 billion).

While there's a Wild West aspect to the opportunities, with many potentially high-impact applications still in the planning stages or even unimagined, IDC made some projections for top AI use cases through 2021. On top, by projected compound annual growth rate, was public safety and emergency response, to which IDC assigned a 75 percent CAGR. Rounding out the top five were pharmaceutical research and discovery, expert shopping advisers and product recommendations, intelligent processing automation, and sales process recommendation.

Posted by Scott Bekker on May 22, 2018

Microsoft this month is informing partners that requirements and costs to participate in direct billing for Cloud Solution Providers (CSPs) will be increasing, starting this summer, and that revenue requirements are a likely next step in the evolution of the program.

In an e-mail sent to direct CSPs on May 10, Microsoft introduced two new direct-bill requirements that partners must meet before their next enrollment period after Aug. 31. According to the e-mail, which was confirmed as authentic by a Microsoft spokesperson, direct CSPs must now purchase a support plan from Microsoft and demonstrate a few key capabilities.

The support plan options are Microsoft Advanced Support for Partners or Microsoft Premier Support for Partners. The Advanced Support plan will cost U.S. partners $15,000 a year and provides break/fix problem resolution, promised response times of less than an hour for critical issues, and the ability to manage support incidents on a customer's behalf. Premier Support is a more expensive and higher-end offering that is customizable and can cover multiple geographies.

There are also two key capability requirements. One is that partners provide at least one managed service, intellectual property service or customer solution application. The other is that the partner must demonstrate a billing and provisioning infrastructure.

The e-mail also suggested partners should expect CSP-related revenue requirements later. "While there are no specific performance targets associated with these updates, your performance will be considered as a key success component in the future," the e-mail stated. Given that the effective start of the program is Sept. 1, which is partway into Microsoft Fiscal Year 2019, it seems likely that any revenue requirements wouldn't take effect until FY 2020, which begins July 1, 2019.

Microsoft declined to make a partner executive available for an interview. The company did provide an e-mailed statement in which it presented the changes as aligning the CSP direct-bill enrollment requirements with the needs and priorities of customers.

"Cloud enablement services are a critical element of Advanced Support for Partners, making this offering essential to help grow cloud businesses and achieve more active, satisfied customers," the statement said, highlighting a partner-focused element of the lower-tier support package.

Microsoft also presented the rollout schedule as giving partners plenty of time to get ready, with partners whose re-enrollment date falls prior to Aug. 31 having 15 months to prepare. "As always, Microsoft provides a long runway to meet any significant changes to requirements which may impact partner status," the statement said.

In its statement, Microsoft also referenced the increased investment and emphasis in the last two years on its network of indirect providers in the CSP program, those partners who sit between Microsoft and about 90 percent of the company's CSP partners, known as indirect resellers. In the United States, there are more than a dozen indirect providers in the CSP program, including Ingram Micro, Tech Data, Synnex, SherWeb, AppRiver and others. Direct CSP partners only make up about 10 percent of CSP partners, company executives have said. "Microsoft's network of indirect CSP partners are also investing in solutions and support to bring additional value to partners worldwide," the Microsoft statement said.

One partner who takes issue with Microsoft's claim that it's providing a long runway is Joel Pippin, president and CEO of Secure Network Administration Inc. in Durham, N.C. A longtime Intermedia and Rackspace partner, Secure Network Administration made the cutover to being a Microsoft CSP enrolled in direct billing about a year ago when Pippin felt the partner program and the Microsoft products had reached sufficient maturity.

Now Pippin fears his renewal will fall right in the earliest wave of the Sept. 1 program, meaning the new requirements will hit his company within about three months.

"As a business owner, I feel cheated. If they were saying, 'We're going to bill you $3,000 or $4,000 a year,' OK. But $15,000? Now Microsoft says pay to play. If they had said pay to play upfront, we would have said we'll stay with Rackspace," Pippin said.

Pippin speculates that Microsoft has calculated the requirement partially in an effort to reduce the number of direct CSPs and herd smaller companies like his into becoming indirect CSPs.

"They're going, 'Ah, we've got too many of these, we've got to weed them out.' They're smart, they probably know exactly where the weed line is in the next couple of years," he said.

For Ric Opal, a vice president at SWC Technology Partners, a large Microsoft partner with a direct billing CSP relationship, the raised requirements and support expenses won't be much more than a blip.

"Unquestionably, customers have to get value from the partner channel. Those two [support agreement] vehicles afford excellent support mechanisms for partners to use if they understand how to amortize that cost over the install base," Opal said. "The intent of the CSP program is to allow partners to be creative with their solutions. I see what they're doing, and it kind of lines up with the intent of the program."

Another group of partners who are unfazed about the immediate set of changes are those who have come to the CSP program from the Dynamics and business applications side, where buying support agreements from Microsoft is a longstanding programmatic requirement.

Julie Lathrop, director of operations at Edgewater Fullscope in Alpharetta, Ga., says that company has maintained support agreements with Microsoft since their partnership started in 2001, and throughout the two years it has acted as a direct CSP. "The changes will not impact us as we already have and use both support offerings with our customers very successfully," Lathrop said in an e-mail interview.

Lathrop says an upside is that any partner involved in the program will have access to Microsoft support beyond the baseline Microsoft Partner Network (MPN) benefit support if an issue arises that the partner can't solve. Indeed, the requirement to spin up a first-line support operation had already been an obstacle to many partners considering enrolling as direct CSPs.

To Lathrop, Premier Support is also an opportunity for direct CSP partners. "Premier was always offered to the larger customers or to customers that could afford it. Many small customers could not take advantage of it and had to take a lower level of support. Now with the partners being able to offer Premier to their customers, the smaller ones can also benefit from the same high quality of support," she said.

She added that Fullscope has built an internal support center and leverages Microsoft to backfill the complex issues: "Our customers expect us to have an outstanding working relationship with Microsoft and, in fact, it is part of our offerings during our sales process. We let our customers know that we can provide them with Tier 1 support and, if necessary, we can escalate it to Microsoft. Great selling point."

Another Microsoft business applications-side partner enrolled as a direct CSP is BroadPoint Technologies in Bethesda, Md.

Andy Gordon, vice president of business development and partnerships at BroadPoint, says the company already maintains an Advanced Support agreement with Microsoft, and is seeing strong gains as a direct CSP partner.

"Through the CSP program, Microsoft has allowed us to be the front line and be the invoicing partner," said Gordon, adding that the company has more than doubled its percentage of recurring revenues by leveraging CSP. "We're slowly but surely turning a corner, and the CSP program has really helped with that."

Given those gains, Gordon says BroadPoint is ready to handle administrative, cost and other back-end changes to the program: "Whatever we have to do, we're on board."

Here's the full text of Microsoft's e-mail to partners:

New CSP direct bill requirements begin August 31, 2018

Digital transformation is evolving the way you and your customers do business. To keep pace and help you make the best decisions for your business, we've updated enrollment requirements for direct bill partners in the Cloud Solution Provider program.

Our goal is to ensure you enjoy steady business growth. While there are no specific performance targets associated with these updates, your performance will be considered as a key success component in the future.

As a valued Microsoft partner, please review and prepare to meet the following direct bill requirements that will go into effect by your next enrollment period after August 31, 2018:

Purchase a Microsoft support plan

To help you meet your customers' specific support needs, we offer two plans:

• Microsoft Advanced Support for Partners provides break/fix problem resolution, response times of less than one hour for critical issues, and the ability to manage support incidents on your customers' behalf.

• Microsoft Premier Support for Partners provides comprehensive support services including prioritized response times, a designated account manager, training programs, and more.

Demonstrate key capabilities

• Provide at least one managed service, IP service, or customer solution application.

• Enable billing and provisioning infrastructure.

If you are unable to meet these new requirements for any reason, you can continue to transact and sell to your customers through an indirect provider in your area.

Here's the full text of Microsoft's e-mailed statement to RCP:

We are updating our Cloud Solution Provider (CSP) direct bill enrollment requirements to ensure our partners are positioned to address the growing customer demand for cloud solutions. Aligning our CSP program to meet the needs and priorities of customers, particularly around cloud usage, enablement, and support, creates more targeted opportunities for partners, and further enables partners to unlock the digital transformation opportunity for customers. Cloud enablement services are a critical element of Advanced Support for Partners, making this offering essential to help grow cloud businesses and achieve more active, satisfied customers. As always, Microsoft provides a long runway to meet any significant changes to requirements which may impact partner status. Microsoft's network of indirect CSP partners are also investing in solutions and support to bring additional value to partners worldwide.

Posted by Scott Bekker on May 17, 2018

Aparavi, a startup in the crowded cloud backup space, this week released a solution that the Southern California company's executives believe will appeal to the second generation of cloud storage users, who have experienced some of the pain points in the cloud and have more sophisticated requirements.

Called Active Archive, the SaaS-based platform's design goals include archiving unstructured data, minimizing storage requirements and promoting independence for customers among cloud platforms with several features designed to smooth migration from one storage cloud to another.

A central motivation behind the Aparavi approach is that backup of unstructured data is growing at rapid rates but that much of that data doesn't actually need to be retained in the public cloud.

"You don't need more backup. You need a niche tool built for the long haul," said Jon Calmes, vice president of business development for the Santa Monica, Calif.-based company.

The platform combines a SaaS-based console and a local appliance built with Aparavi software that organizations use to control backups from their local data source to a cloud target, set backup and retention policies, and search for backed-up files across clouds. Supported public clouds include Amazon Web Services (AWS), Microsoft Azure, Google Cloud, Wasabi and IBM Cloud. The platform also backs up to Caringo, Cloudian and Scality on-premises private cloud platforms.

The company allows for optional local snapshots at the source, called checkpoints, but depends on file-based snapshots on the appliance, which are then backed up to the cloud of the customer's choice. By taking a file-based -- rather than an image-based -- approach, Aparavi can provide tools for users to control their cloud storage environment, Calmes said.

Users are able to classify data and set retention policies to allow certain data to be deleted after a specified amount of time or to tag files as "legal" or "confidential." Tagging data as "personal identity" using that capability can help an organization protect that data to meet the European Union's General Data Protection Regulation (GDPR) requirements.

Organizations can search for data based on metadata, such as creation date or file name, or using full-text content search. A capability Aparavi calls "data pruning" automatically removes data and file increments based on retention policies to help organizations keep cloud backup sizes under control.
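To make the idea of retention-driven pruning concrete, here is a minimal sketch of how tags and retention policies might combine to decide which archived files get removed. It is not Aparavi's implementation. The "legal" and "confidential" tags come from the description above, while the "general" tag, the retention periods, the `ArchivedFile` class and the `prune` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Dict, List, Optional, Tuple


@dataclass(frozen=True)
class ArchivedFile:
    name: str
    tag: str           # classification assigned by the user
    archived_on: date  # when this copy was written to the archive


# Hypothetical retention periods in days; None means "keep indefinitely".
RETENTION_DAYS: Dict[str, Optional[int]] = {
    "general": 365,
    "confidential": 3 * 365,
    "legal": None,
}


def prune(
    files: List[ArchivedFile],
    today: date,
    retention: Dict[str, Optional[int]] = RETENTION_DAYS,
) -> Tuple[List[ArchivedFile], List[ArchivedFile]]:
    """Split files into (kept, removed) according to each tag's retention period."""
    kept: List[ArchivedFile] = []
    removed: List[ArchivedFile] = []
    for f in files:
        # Unknown tags fall back to the "general" policy (an assumption).
        limit = retention.get(f.tag, retention["general"])
        expired = limit is not None and (today - f.archived_on) > timedelta(days=limit)
        (removed if expired else kept).append(f)
    return kept, removed


archive = [
    ArchivedFile("notes.txt", "general", date(2016, 1, 1)),
    ArchivedFile("contract.pdf", "legal", date(2010, 1, 1)),
    ArchivedFile("payroll.xlsx", "confidential", date(2017, 1, 1)),
]
kept, removed = prune(archive, today=date(2018, 5, 16))
print("kept:", [f.name for f in kept])
print("removed:", [f.name for f in removed])
```

In this example only the untagged, two-year-old notes file ages out. The "legal" file is kept regardless of age, and the "confidential" file is still inside its assumed three-year window.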

Founded in 2016, Aparavi came out of stealth mode in October 2017. One of the features added since a test version released last fall is support for multiple clouds. "Multi-cloud is often a misnomer," Calmes said. "Having the ability to switch at any time without getting pummelled with egress fees is a challenge."

Calmes said customers, or managed service providers (MSPs) working on their behalf, will be able to use Aparavi's capabilities in combination to help with a migration from one public cloud to another. "If you're an MSP using Amazon S3 in Glacier and you want to move to Azure and the archive tier, for example, what Aparavi allows you to do is immediately stop the flow of data into that cloud," Calmes said.

Aparavi expects to work with multiple types of partners, from MSPs to service providers to OEMs to systems integrators, to reach the midmarket and enterprise customers that it expects will find its capabilities most appealing. Calmes said the company will roll out a more detailed partner program later this year.

Posted by Scott Bekker on May 16, 2018

Veeam Software executives this week urged partners at the company's VeeamOn 2018 conference in Chicago to go "all in" with the backup and availability specialist as it focuses on a dramatically expanded portfolio of solutions, from on-premises agents to public cloud.

"Customers are making the decision to move on from their legacy backup products," said Kevin Rooney, vice president of Americas Partner Sales, in a keynote for the partner general session on Monday. Rooney said the key opportunity is from now through the next 12 to 18 months for Veeam's 55,000 partners, who account for 100 percent of Veeam sales.

Rooney said it's important to understand where those customers are in terms of their legacy providers. "They're looking for the top end of the technology," Rooney said.

Kevin Rooney, Veeam's vice president of Americas Partner Sales, during his Monday keynote. (Source: Veeam)

At VeeamOn, the company is rolling out a new branding to reach a larger total addressable market. The company is moving from its recent tagline of "availability for the always-on enterprise" to "intelligent data management for the hyper-available enterprise."

Co-CEO and President Peter McKay walked partners through a simplified Veeam history in his keynote, saying the company was about virtual machine backup from its founding in 2006, recovery in 2010, availability last year, and hyper-availability now. He defined hyper-availability as the need to protect and make available data that is critical to the business, growing exponentially and sprawling across locations that include physical datacenters, virtual datacenters, public clouds and SaaS applications.

Ratmir Timashev, Veeam's co-founder and senior vice president for marketing and corporate development, said the current battle in the industry is to dominate the multi-cloud, that portion of the sprawling infrastructure that includes providing availability for services like Amazon Web Services (AWS), Microsoft Azure, Google Cloud and IBM Cloud. Timashev said the company's acquisition earlier this year of N2WS, an AWS backup and recovery specialist, was a key component of that strategy, and McKay said the company would rely on a mix of internal development and acquisitions to add coverage of additional cloud platforms and SaaS applications.

Rooney encouraged partners to take advantage of the new cloud and physical workload opportunities within Veeam's platform. "One of the knocks against the Veeam for a while [from competitors] was, 'Hey, they're virtual-only.' That's off the table. The agents we should be selling across the board," Rooney said in reference to new agents for IBM AIX and Oracle Solaris operating systems that Veeam unveiled last November.

Rooney also pointed partners to Veeam Backup for Microsoft Office 365. "A lot of customers don't realize that Office 365 is not inherently backed up," Rooney said.

During a media breakfast on Tuesday morning, Vice President of Product Strategy Danny Allan detailed Veeam's momentum around the Office 365 product.

"There are 29,000 organizations using backup for Office 365 today. That represents 2.7 million mailboxes," he said. The 1.0 version released last year protected mailboxes. A version 1.5 added multi-tenant capabilities aimed at Veeam Cloud & Service Providers (VCSPs). The company released a beta last week for a 2.0 version with support for OneDrive and SharePoint. That version is targeted for general availability in the second quarter, Allan said.

Those sales are part of a broader growth story for Veeam. Last month, Veeam announced that the first quarter of 2018 was its 39th straight quarter of record bookings growth. The company claimed 21 percent growth year-over-year and said it was on track to become a $1 billion company (by revenues) in 2018. In a graphic shown to partners earlier in the day, Veeam told partners it was a $200 million company in 2012, was on track to hit $1.1 billion in 2018, and was aiming for $1.5 billion in 2020.

Senior executives assured partners that Veeam was in it for the long haul with a 10-year planning horizon and wasn't looking to sell the company or go public.

"What are the reasons for us to sell? We are fast-growing, we have a great market. There is not a single reason. And we don't have venture capitalists. They don't need the exits, they are not pushing us for the exits," Timashev said.

Posted by Scott Bekker on May 15, 2018