Microsoft-Backed Report Makes Business Case for 'Responsible AI'

By David Ramel

January 10, 2025

A new report by research firm IDC commissioned by Microsoft finds "responsible AI" initiatives are more than feel-good marketing; they make good business sense, too.

Among the more than 90 percent of organizations that use AI technologies, the whitepaper found, those using responsible AI solutions report myriad benefits beyond operational efficiency and improved customer experiences. Those benefits include "improved data privacy, enhanced customer experience, confident business decisions, and strengthened brand reputation and trust," said Microsoft executive Sarah Bird in a blog post last week. "These solutions are built with tools and methodologies to identify, assess, and mitigate potential risks throughout their development and deployment."

A responsible organization, the report says, includes these foundational elements:

- Core values and governance: It defines and articulates responsible AI (RAI) mission and principles, supported by the C-suite, while establishing a clear governance structure across the organization that builds confidence and trust in AI technologies.

- Risk management and compliance: It strengthens compliance with stated principles and with current laws and regulations while monitoring future ones, develops policies to mitigate risk, and operationalizes those policies through a risk management framework with regular reporting and monitoring.

- Technologies: It uses tools and techniques to support principles such as fairness, explainability, robustness, accountability, and privacy, and builds these into AI systems and platforms.

- Workforce: It empowers leadership to elevate RAI as a critical business imperative and provides all employees with training to give them a clear understanding of responsible AI principles and how to translate these into actions. Training the broader workforce is paramount for ensuring RAI adoption.

A few Microsoft-provided highlights of the report, meanwhile, include:

- More than 30% of respondents noted that the lack of governance and risk management solutions is the top barrier to adopting and scaling AI.

Top Barriers to AI Adoption (source: IDC).

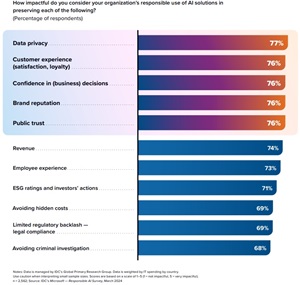

- More than 75% of respondents who use responsible AI solutions reported improvements in data privacy, customer experience, confident business decisions, brand reputation, and trust.

Level of Impact of Organization's Responsible Use of AI Solutions (source: IDC).

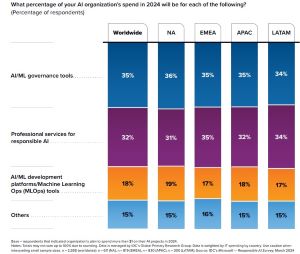

- Organizations are increasingly investing in AI and machine learning governance tools and professional services for responsible AI, with 35% of AI organization spend in 2024 allocated to AI and machine learning governance tools and 32% to professional services.

AI Organization's Budget Allocation, 2024 (source: IDC).

The report also provides advice to organizations seeking to implement responsible AI. They should establish clear principles guiding AI development, avoid reinforcing unfair biases, and prioritize safety by testing systems in controlled environments and monitoring post-deployment. They should form diverse AI Governance Committees to oversee responsible use, align internal and external policies with legal and ethical standards, and promote transparency and explainability. Regular AI audits, privacy protection, and diverse testing criteria are essential, alongside ongoing employee training in responsible AI practices. Organizations should adopt end-to-end governance, encompassing infrastructure, model, application, and end-user layers, to address risks and compliance. Adapting to regulations like the EU AI Act, which imposes strict requirements for high-risk applications, is crucial. Enterprises should also enhance governance over generative AI systems by controlling user interactions, adding safeguards, and fostering safe exploration to balance risk mitigation with the benefits of AI tools.

Microsoft summarized that advice and provided links to more information for its users, especially those on the Azure cloud, which sponsored the survey report:

- Establish AI principles: Commit to developing technology responsibly and establish specific application areas that will not be pursued. Avoid creating or reinforcing unfair bias and build and test for safety. Learn how Microsoft builds and governs AI responsibly.

- Implement AI governance: Establish an AI governance committee with diverse and inclusive representation. Define policies for governing internal and external AI use, promote transparency and explainability, and conduct regular AI audits. Read the Microsoft Transparency Report.

- Prioritize privacy and security: Reinforce privacy and data protection measures in AI operations to safeguard against unauthorized data access and ensure user trust. Learn more about Microsoft's work to implement generative AI across the organization securely and responsibly.

- Invest in AI training: Allocate resources for regular training and workshops on responsible AI practices for the entire workforce, including executive leadership. Visit Microsoft Learn and find courses on generative AI for business leaders, developers, and machine learning professionals.

- Stay abreast of global AI regulations: Keep up to date with global AI regulations, such as the EU AI Act, and ensure compliance with emerging requirements. Stay up to date with requirements at the Microsoft Trust Center.

"By shifting from a reactive AI compliance strategy to the proactive development of mature responsible AI capabilities, organizations will have the foundations in place to adapt as new regulations and guidance emerge," the report said in conclusion. "This way, businesses can focus more on performance and competitive advantage and deliver business value with social and moral responsibility."

More information can be found in an accompanying webinar.

The report used survey data from March 2024.

About the Author

David Ramel is an editor and writer at Converge 360.